Many people are at significant risk of a phishing attack. In this scenario the person may receive an email that looks like it came from a legitimate source (e.g. their bank) and encourages them to click a link that presents them with their bank login page. The user then attempts to log in…

Except that site isn’t their banking site. It’s a mockup that looks like the real one. And they’ve now told their banking password to the attacker.

A variant on this, called Spear Phishing, is specifically targeted at a company; e.g. the website might look like an intranet login page, and now the attacker has gained credentials to the company network. This situation is made worse by SSO solutions; that password may be the same password used to log in to your Windows desktop!

Related to this (and playing into this) is Typo Squatting; the bad website might be called paypale.com, for example. Or paipal.com or paypa1.com or… the ways the URL can be made to look similar to the original are unlimited. They need not even be that smart; paypal_com.example.com?

SSL as a mitigation… or not!

In the early days of SSL it was very hard to get a certificate; you had to prove to the Certificate Authority (CA) who you were, and there were checks in place to prevent bad certificates from being issued. We also wanted to encourage people to only enter sensitive data, such as credit card numbers, into an SSL encrypted site. So we taught people to look for the “green padlock”. If there was no padlock then the site was not safe.

And this kinda worked, for a while. The difficulty in getting a cert meant that a green padlock was a level of trust. And so this advice mutated and started to be understood as “green padlock means it is safe”. Which is not quite the same thing.

With automated free certificates, such as those from Let’s Encrypt, I could request and get a certificate for www.paypal.com.sweharris.org. In this scenario a phisher could send potential victims to a site that has the green padlock.

People learned the wrong lesson from the green padlock.

Now there are some things we can do to help teach people. Many companies use services such as PhishMe to run training and test scenarios. The use of Extended Validation Certificates can help highlight good sites. And, of course, we can teach people not to click links and to carefully inspect URLs before entering data.

But even the best of people make mistakes. I may have been phished once, but the second after I realised this I changed the password on the account. If it can happen to me then it can happen to anyone.

Ideally, of course, CAs wouldn’t release certs for well known domains (what legitimate reason do I have for paypal.com.sweharris.org?) but given the sheer number of sites in question (banks, other financial institutions, government sites, commerce sites…) and the typosquatting and obfuscation problem, this is not really practical.

The consequence of making it easy for the ‘good guys’ to get SSL certs is that it’s also easy for the bad guys to get them.

So what can companies do to protect their customers?

Certificate Transparency

Certificate Transparency (CT) came about as a result of a number of errors made by CAs, such as mistakenly providing a cert for a domain they shouldn’t have done, or a hacker compromising a CA partner to gain certs for google.com and similar.

With CT every certificate issued is logged to a number of log services by the CA, and those logs are open to the public.

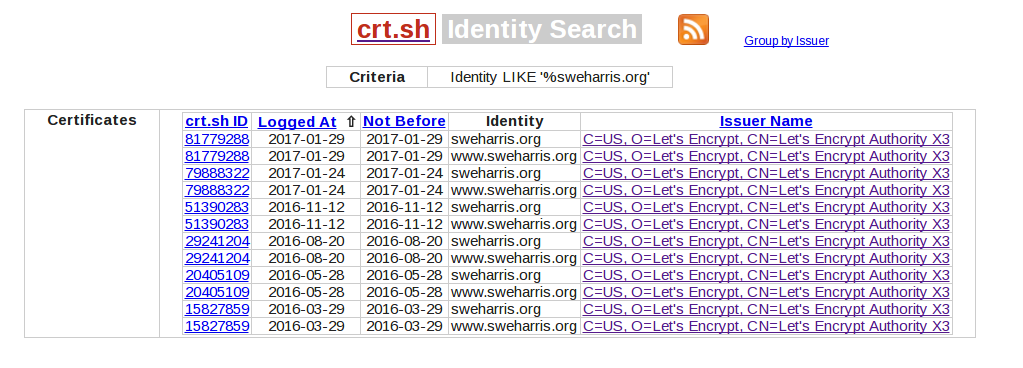

One easy to use site for viewing these logs is crt.sh. You can enter the domain name you care about and get a list of all the certs issued.

For example, the certificates for this site:

Hmm, interesting… why did I request a new cert on 2017/1/29, just 5 days after the previous one? Digging deeper I can see that’s because I added this site as a SAN entry to my default (non-SNI aware) cert.

Automation

Of course these sorts of logs are fine for “eyes on glass” monitoring but larger companies don’t want manual processing; automation is the key. Fortunately there are APIs available as well. Symantec run a log that produces nice JSON output that is easy to call.

For example, the call for this site would be:

https://cryptoreport.websecurity.symantec.com/chainTester/webservice/ctsearch/search?keyword=sweharris.org
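A minimal sketch of making that call from Python, using only the standard library. The endpoint and the `keyword` parameter are taken from the URL above; the function names are hypothetical, the service may no longer be reachable, and nothing is assumed about the response beyond it being JSON.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

# Endpoint copied from the URL above; it may have changed or been retired.
CT_SEARCH_URL = ("https://cryptoreport.websecurity.symantec.com"
                 "/chainTester/webservice/ctsearch/search")


def build_search_url(domain):
    """Build the CT search URL for a domain (hypothetical helper)."""
    return CT_SEARCH_URL + "?" + urlencode({"keyword": domain})


def search_ct_log(domain, timeout=10):
    """Fetch and decode the JSON response. This performs a network request."""
    with urlopen(build_search_url(domain), timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


# Building the URL needs no network access.
print(build_search_url("sweharris.org"))
```

Only `build_search_url` runs here; `search_ct_log("sweharris.org")` would be the call a scheduled job makes.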

A typical entry might look like

    {
      "documentId": "q1SpPQpqDh6nQoKQW5sl5CjPNbwBlMY6F7LE1K7vakQ=",
      "serialNumber": "03483b8d546d95fe3c1726b5f68eb5b9533a",
      "commonName": "www.sweharris.org",
      "notAfter": "2017-Feb-10",
      "notBefore": "2016-Nov-12",
      "organization": "",
      "issuerCommonName": "Let's Encrypt Authority X3",
      "issuerOrganization": "Let's Encrypt",
      "subjectAlternativeNames": [
        "sweharris.org",
        "www.sweharris.org"
      ],
      "issuer": "Let's Encrypt Authority X3"
    },

It now becomes possible for a company to automate checking of CT logs and compare them to their request system. Any log entry that shows up that doesn’t match a request can be flagged to the Security Operations Center (SOC) for followup and investigation with the CA.
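The comparison described above could be sketched roughly as follows. This assumes the CT entries carry a `serialNumber` field as in the sample entry, and that the company's request system can export the serial numbers of the certs it asked for. The function name, the second sample entry and the `requested` list are invented for illustration.

```python
import json


def find_unexpected_certs(ct_entries, requested_serials):
    """Return CT entries whose serial number is not in our own request records."""
    known = {serial.lower() for serial in requested_serials}
    return [entry for entry in ct_entries
            if entry.get("serialNumber", "").lower() not in known]


# Sample data shaped like the entry shown earlier; the second entry is made up.
sample_entries = json.loads("""
[
  {"serialNumber": "03483b8d546d95fe3c1726b5f68eb5b9533a",
   "commonName": "www.sweharris.org",
   "issuerCommonName": "Let's Encrypt Authority X3"},
  {"serialNumber": "00aa11bb22cc",
   "commonName": "login.sweharris.org",
   "issuerCommonName": "Some Other CA"}
]
""")

# Hypothetical export from the internal certificate request system.
requested = ["03483B8D546D95FE3C1726B5F68EB5B9533A"]

unexpected = find_unexpected_certs(sample_entries, requested)
for entry in unexpected:
    print("Flag for SOC:", entry["commonName"],
          "issued by", entry["issuerCommonName"])
```

Matching on serial number alone is a simplification; a real check would probably also compare the issuer, since serial numbers are only unique per CA.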

Companies can also be proactive. They can look for re-use of their name elsewhere (so Paypal could search for any cert that has paypal in it). They can also look for common typosquatted variations (although, as previously noted, this isn’t a complete answer). This can help mitigate some of the phishing attacks.
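One way such a search might be approximated is to flag any certificate name that contains the brand, or something close to it, outside the company's own domain. The sketch below uses `difflib` from the standard library for the "close to it" part. The function name and the 0.8 similarity threshold are arbitrary assumptions, and, as noted, this will miss plenty of obfuscations.

```python
from difflib import SequenceMatcher


def suspicious_names(brand, legit_domain, cert_names, threshold=0.8):
    """Flag names that mention the brand, or a near miss, outside the real domain."""
    brand = brand.lower()
    legit_domain = legit_domain.lower()
    flagged = []
    for name in cert_names:
        host = name.lower().rstrip(".")
        # Names under the real domain are the company's own.
        if host == legit_domain or host.endswith("." + legit_domain):
            continue
        # Split on dots, hyphens and underscores so "paypal_com" yields "paypal".
        tokens = host.replace("-", ".").replace("_", ".").split(".")
        for token in tokens:
            similar = SequenceMatcher(None, brand, token).ratio() >= threshold
            if brand in token or similar:
                flagged.append(name)
                break
    return flagged


names = [
    "www.paypal.com",
    "www.paypal.com.sweharris.org",
    "paypale.com",
    "paipal.com",
    "paypa1.com",
    "paypal_com.example.com",
    "example.org",
]
for name in suspicious_names("paypal", "paypal.com", names):
    print("Look-alike:", name)
```

The names in the list are the examples used earlier in this post; in practice they would come from the common names and SAN entries in the CT log results.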

Summary

CT logs are one way that a company can protect their customers from a number of phishing attacks. It’s not perfect, but it has benefits.

Enterprise key management solutions may offer the ability to search CT logs as part of their solution; if you’re looking at such a solution then ask the vendor what they do about CT logs, and how integrated it is in their solution. They may even run their own CT log server!

Other tools can also help out with rogue certificate issuance, such as CAA DNS records (a method of telling CAs which of them are authorised to issue certificates for your domain) and HPKP (to pin known good keys into the browser). For example, Comodo would refuse to issue a cert for your domain if your CAA records say that only Let’s Encrypt is allowed! But CT logs are a way of verifying what has actually been issued.
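To make the CAA idea concrete, here is a much simplified sketch of the decision a CA makes when it reads a domain's CAA records. It only looks at `issue` records and ignores `issuewild`, the critical flag and the walk up to parent domains, all of which a real CA has to handle. The function name is hypothetical and the CA identifiers are used purely for illustration.

```python
def ca_may_issue(caa_records, ca_domain):
    """Simplified CAA check; caa_records is a list of (tag, value) pairs."""
    issue_values = [value for tag, value in caa_records
                    if tag.lower() == "issue"]
    if not issue_values:
        # No "issue" records: CAA places no restriction on issuance.
        return True
    for value in issue_values:
        # Drop any parameters after ';' and compare the issuer domain name.
        issuer = value.split(";", 1)[0].strip().lower()
        if issuer == ca_domain.lower():
            return True
    return False


# A domain that only authorises Let's Encrypt.
records = [("issue", "letsencrypt.org")]
print("letsencrypt.org:", ca_may_issue(records, "letsencrypt.org"))
print("comodoca.com:", ca_may_issue(records, "comodoca.com"))
```

A record value of just `;` names no issuer at all, so under this sketch no CA would be allowed to issue.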