Container technology, specifically Docker, is becoming an important part of any enterprise. Even if you don’t have development teams targeting Docker you may have a vendor wanting to deliver their software in container form. I’m not so happy with that, but we’re going to have to live with it.

To properly control the risk around this, I feel it helps to understand the basics of what a Docker container is. Since I come from a technical background, I like to look at it from a technology-driven perspective.

This is the first in a series of “Docker basics” posts that will try to explain the whats and whys of a Docker container.

Container technology is a form of OS virtualisation

We’re used to virtualisation in the form of VMware or Xen or KVM (or LDOMs on Solaris, or LPARs on AIX).

Tools such as VMware ESX virtualize hardware

- “Here is a virtual Intel server”

- One physical server, multiple virtual servers

- Each virtual server can be different, but needs to run on the Intel platform

- Windows and Linux living together

- Can run most OSes unchanged

- Can present “virtual” hardware (e.g. network, disk interfaces) that is optimized

- May require special drivers in the guest

On the other hand, container technologies virtualize the OS

- “Here is a virtual Linux instance”

- One Kernel, multiple OS userspaces

- Each OS userspace can be different, but needs to run on the Linux kernel

- Debian and RedHat living together

- Can run everything from init/systemd down to a single process

Docker, Docker, Docker. What is Docker?

Docker can be thought of in a number of different ways

- A container run-time engine

  - Uses Linux kernel constructs

    - LXC (early releases only; since replaced by Docker’s own libcontainer/runc)

    - Namespaces

    - cgroups

    - Networking

- An application packaging format

  - Provides a standard way of deploying an application image

  - The “container” contains the code, dependencies, etc inside the image

- A build engine

  - Provides a way of creating the application image

- An ecosystem

  - Repositories, tools, images, workflows…
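The kernel constructs in the list above are visible from an ordinary shell, even without Docker installed. A minimal sketch, assuming a Linux host with /proc mounted (the function name show_isolation is mine, for illustration only):

```shell
#!/bin/sh
# Print the namespaces and cgroups of a process (default: this shell).
# Assumes Linux with /proc mounted; elsewhere it only prints a note.
show_isolation() {
    pid=${1:-$$}
    if [ -d "/proc/$pid/ns" ]; then
        # One entry per namespace type (mnt, pid, net, uts, ipc, ...).
        ls "/proc/$pid/ns"
        # cgroup membership, one line per hierarchy.
        cat "/proc/$pid/cgroup" 2>/dev/null
    else
        echo "no /proc/$pid/ns here (not Linux?)"
    fi
    return 0
}

show_isolation
```

Run against the PID of a containerised process (which usually needs root), the same listing shows what the engine set up for it. Using ls -l on the ns directory of two processes shows whether they share a namespace.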

Layers upon layers

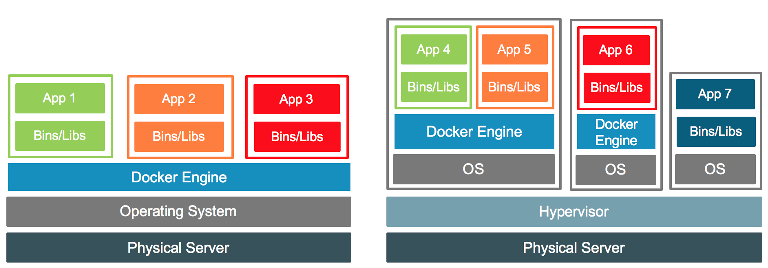

The Docker engine runs as a privileged process in user space, and so sits on top of a running kernel. This means it can run on a VM or on a bare metal OS deployment. Indeed both forms may exist at the same time. In a VM world the physical host may run both containerised and traditional apps inside different VMs.

(Image from https://blog.docker.com/2016/04/containers-and-vms-together/ )


The world’s simplest container

Let’s get down to basics and build our first container. First a simple “Hello world” program in C:

% cat hello.c

#include <stdio.h>

#include <stdlib.h>

int main(int argc,char *argv[])

{

printf("Hello, World\n");

}

We compile it statically (we’ll get to why in a moment):

% gcc -static -o hello hello.c

To build a Docker image we can use a Dockerfile, which contains the instructions:

% cat Dockerfile

FROM scratch

COPY hello /

CMD ["/hello"]

This file means

- “start from a blank (scratch) image”

- copy the file “hello” into the root directory of the container.

- When the container is started run the command “/hello”.

We can now tell the system to build the container:

% docker build -t hello .

Sending build context to Docker daemon 852.5 kB

Step 1/3 : FROM scratch

--->

Step 2/3 : COPY hello /

---> ff932a2c2088

Removing intermediate container 60fa0962d256

Step 3/3 : CMD /hello

---> Running in a6375caaec44

---> 1cb4c0f3e212

Removing intermediate container a6375caaec44

Successfully built 1cb4c0f3e212

An important point here is that the engine does the build, not your current shell (hence “Sending build context to Docker daemon”). The docker command tars up the current directory and sends it to the engine, which does all the hard work and creates the image.
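To get a feel for what “build context” means, the tar step can be imitated by hand. This is only an approximation of what the client does for the builder used in this post (the real client also honours a .dockerignore file, which this sketch ignores); the function names and the stand-in directory are made up for illustration:

```shell
#!/bin/sh
# Approximate the build context that `docker build .` sends to the engine:
# a tar stream of the given directory.
context_listing() {
    tar -cf - -C "$1" . | tar -tf -
}

# Size in bytes of that tar stream.
context_bytes() {
    tar -cf - -C "$1" . | wc -c | tr -d ' '
}

# Stand-in directory for illustration; the file contents are placeholders.
demo=$(mktemp -d)
printf 'FROM scratch\nCOPY hello /\nCMD ["/hello"]\n' > "$demo/Dockerfile"
printf 'placeholder for the compiled binary\n' > "$demo/hello"

context_listing "$demo"
echo "context size: $(context_bytes "$demo") bytes"
```

This is presumably why the build above reported 852.5 kB: the statically linked binary sits in the directory, so it is part of the context. Anything else lying around in that directory gets sent to the engine too.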

We can see the results, and run the container:

% docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

hello latest 1cb4c0f3e212 4 seconds ago 849 kB

% docker run --rm hello

Hello, World

The --rm flag means “clean up after running”. We’ll see more about this in later blog entries.

What does the container image look like?

So now we’ve built this container, what does it look like?

We can export the image from the engine and look at it:

% mkdir /tmp/container

% cd /tmp/container

% docker save hello | tar xvf -

1cb4c0f3e212f5abfb4b0b74d36a6ace8b7fad164b3e460d8cbf7fb1c3905270.json

59aef640ce511eadb6169072a6d8cefd95a3d1b14b73b92bd86fcb7e0b67618e/

59aef640ce511eadb6169072a6d8cefd95a3d1b14b73b92bd86fcb7e0b67618e/VERSION

59aef640ce511eadb6169072a6d8cefd95a3d1b14b73b92bd86fcb7e0b67618e/json

59aef640ce511eadb6169072a6d8cefd95a3d1b14b73b92bd86fcb7e0b67618e/layer.tar

manifest.json

tar: manifest.json: implausibly old time stamp 1970-01-01 00:00:00

repositories

tar: repositories: implausibly old time stamp 1970-01-01 00:00:00

The content is in the layer tar file

% tar tf 59aef640ce511eadb6169072a6d8cefd95a3d1b14b73b92bd86fcb7e0b67618e/layer.tar

hello

It’s basically just a tarball with metadata!
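Since each layer is an ordinary tar file, walking an unpacked image takes only a few lines. A small sketch, assuming the <id>/layer.tar layout shown above (other Docker versions may arrange the archive differently); list_layers and the stand-in directory are hypothetical names:

```shell
#!/bin/sh
# List the files held in each layer of an image unpacked from `docker save`.
list_layers() {
    for layer in "$1"/*/layer.tar; do
        [ -f "$layer" ] || continue
        echo "== $layer"
        tar -tf "$layer"
    done
}

# Stand-in for an unpacked image, for illustration only
# ("layer0" is a made-up layer ID).
img=$(mktemp -d)
mkdir "$img/layer0" "$img/src"
printf 'placeholder\n' > "$img/src/hello"
tar -cf "$img/layer0/layer.tar" -C "$img/src" hello

list_layers "$img"
```

Against the real export this would be run as list_layers /tmp/container.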

Why did I build that with -static?

In the above example I used -static while compiling. Let’s see what happens if I don’t do that.

% gcc -o hello hello.c

% docker build -t hello-dynamic .

Sending build context to Docker daemon 12.29 kB

Step 1/3 : FROM scratch

--->

Step 2/3 : COPY hello /

---> b94d1686eec2

Removing intermediate container f5fdec11138e

Step 3/3 : CMD /hello

---> Running in bc887d4cc424

---> 5f13658614ea

Removing intermediate container bc887d4cc424

Successfully built 5f13658614ea

% docker run --rm hello-dynamic

standard_init_linux.go:178: exec user process caused "no such file or directory"

“No such file or directory” isn’t a helpful error message: the file that is missing is not /hello itself but the dynamic loader and shared libraries it depends on. Experience with older technologies (chroot environments) reminds me that we need to include all the libraries we need inside the image as well. Remember, a container needs to include all the dependencies it needs!

OK, we can work out what is needed, and update our build and Dockerfile to include what is necessary:

% ldd hello

linux-vdso.so.1 => (0x00007ffca81c9000)

libc.so.6 => /lib64/libc.so.6 (0x00007fa02da4d000)

/lib64/ld-linux-x86-64.so.2 (0x00007fa02de22000)

% mkdir lib64

% cp /lib64/libc.so.6 /lib64/ld-linux-x86-64.so.2 lib64

% cat Dockerfile

FROM scratch

COPY hello /

COPY lib64/ /lib64/

CMD ["/hello"]

If you have ever built an “anonymous FTP” chroot then this will feel familiar; all library dependencies need to be inside the container image.
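The manual ldd-and-copy step can be scripted. The following is a rough sketch rather than a robust tool: it assumes ldd output in the format shown above, the names parse_ldd, stage_libs and rootfs are hypothetical, and libraries loaded at run time with dlopen will not appear in ldd output at all:

```shell
#!/bin/sh
# Extract absolute library paths from ldd-style output on stdin.
parse_ldd() {
    awk '
        $2 == "=>" && $3 ~ /^\// { print $3; next }  # libc.so.6 => /lib64/libc.so.6 (0x...)
        $1 ~ /^\//               { print $1 }        # /lib64/ld-linux-x86-64.so.2 (0x...)
    ' | sort -u
}

# Copy every library a binary links against into a staging tree,
# preserving each library's directory so the loader can find it.
stage_libs() {
    bin=$1
    dest=$2
    ldd "$bin" | parse_ldd | while read -r lib; do
        mkdir -p "$dest$(dirname "$lib")"
        cp -L "$lib" "$dest$lib"
    done
}

# Usage (hypothetical staging directory "rootfs"):
#   stage_libs ./hello rootfs
#   ...then use "COPY rootfs/ /" in the Dockerfile
```

The vdso line is skipped on purpose: it is provided by the kernel and has no file on disk to copy.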

This time the container runs:

% docker build -t hello-dynamic .

Sending build context to Docker daemon 2.288 MB

Step 1/4 : FROM scratch

--->

Step 2/4 : COPY hello /

---> Using cache

---> b94d1686eec2

Step 3/4 : COPY lib64/ /lib64/

---> 280171c871b9

Removing intermediate container 3872e77dd215

Step 4/4 : CMD /hello

---> Running in fe399496bd09

---> 6d706ccc02fc

Removing intermediate container fe399496bd09

Successfully built 6d706ccc02fc

% docker run --rm hello-dynamic

Hello, World

What does this new container look like?

We’d expect to see the 3 files inside the layer file. Let’s look:

% mkdir /tmp/container

% cd /tmp/container

% docker save hello-dynamic | tar xvf -

10e6ef63539659eef459b5b0795ad1d91e44b4d3d6b5cf369f026cabbef333f4/

10e6ef63539659eef459b5b0795ad1d91e44b4d3d6b5cf369f026cabbef333f4/VERSION

10e6ef63539659eef459b5b0795ad1d91e44b4d3d6b5cf369f026cabbef333f4/json

10e6ef63539659eef459b5b0795ad1d91e44b4d3d6b5cf369f026cabbef333f4/layer.tar

1f5263a466b60441b1569ae0bf6d059f6a866d721bde8c8e795313116400fb4f/

1f5263a466b60441b1569ae0bf6d059f6a866d721bde8c8e795313116400fb4f/VERSION

1f5263a466b60441b1569ae0bf6d059f6a866d721bde8c8e795313116400fb4f/json

1f5263a466b60441b1569ae0bf6d059f6a866d721bde8c8e795313116400fb4f/layer.tar

6d706ccc02fc6c6ead9b6913b8bd21f15a34abcb09b5deb8e5407af5f4203f14.json

manifest.json

tar: manifest.json: implausibly old time stamp 1970-01-01 00:00:00

repositories

tar: repositories: implausibly old time stamp 1970-01-01 00:00:00

Oh, hey, multiple layers! Let’s look inside each layer:

% tar tf 10e6ef63539659eef459b5b0795ad1d91e44b4d3d6b5cf369f026cabbef333f4/layer.tar

hello

% tar tf 1f5263a466b60441b1569ae0bf6d059f6a866d721bde8c8e795313116400fb4f/layer.tar

lib64/

lib64/ld-linux-x86-64.so.2

lib64/libc.so.6

So each COPY command created a new layer in the Docker image, and these layers show up in the manifest file:

% jq '.[] | [ .Layers ]' < manifest.json

[

[

"10e6ef63539659eef459b5b0795ad1d91e44b4d3d6b5cf369f026cabbef333f4/layer.tar",

"1f5263a466b60441b1569ae0bf6d059f6a866d721bde8c8e795313116400fb4f/layer.tar"

]

]

This is hard work! What if I wanted perl or php or…?

Working out dependencies by hand isn’t a good solution. Each library may have its own set of dependencies, and libraries that are optionally loaded at run time (e.g. name resolver libraries) won’t be found. So, instead of starting from scratch, we can bring in a base OS image:

% cat Dockerfile

FROM debian:latest

COPY hello /

CMD ["/hello"]

The FROM debian:latest line tells the engine to use an image of that name as the base layer. If the engine doesn’t already have this image then it will reach out to a registry to find and pull it (default: docker.io). This is done by the engine, and the engine can be configured to use HTTP proxies (if necessary). So unless you explicitly block this, any developer can pull almost anything in.

This layer now becomes the base on which our code gets copied. The build now runs pretty much as expected; we can see the engine doing the pull to get the necessary image.

% docker build -t hello-debian .

Sending build context to Docker daemon 12.29 kB

Step 1/3 : FROM debian:latest

latest: Pulling from library/debian

10a267c67f42: Pull complete

Digest: sha256:476959f29a17423a24a17716e058352ff6fbf13d8389e4a561c8ccc758245937

Status: Downloaded newer image for debian:latest

---> 3e83c23dba6a

Step 2/3 : COPY hello /

---> c76829938202

Removing intermediate container 78158e94a68c

Step 3/3 : CMD /hello

---> Running in 83c90a1cb1d8

---> 740546dadcc9

Removing intermediate container 83c90a1cb1d8

Successfully built 740546dadcc9

% docker run --rm hello-debian

Hello, World

Size and complexity

This convenience doesn’t come for free, of course!

% docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

hello-debian latest 740546dadcc9 3 minutes ago 124 MB

hello-dynamic latest 6d706ccc02fc 17 hours ago 2.28 MB

hello latest 1cb4c0f3e212 17 hours ago 849 kB

debian latest 3e83c23dba6a 3 weeks ago 124 MB

Our “Hello, World” application has grown from 849 kB to a whopping 124 MB.

% docker save hello-debian | tar xf -

tar: manifest.json: implausibly old time stamp 1970-01-01 00:00:00

tar: repositories: implausibly old time stamp 1970-01-01 00:00:00

% for a in */layer.tar; do print -n "$a: " ; tar tf $a | wc -l ; done

7a83b1430fe3eaed26ecd9b011dcd885c6f04e45bee3205734fea8ce7382a01e/layer.tar: 1

a1ae6cd0978a7d90aea036f11679f31e1a3142b1f40030fb80d4c8748b0a6a01/layer.tar: 8259

It’s also grown from 1 file to 8,260 files.

But we no longer need to track dependencies. Is this a win? Well, from a developer’s perspective, it most definitely is.

Security concerns

From a security perspective though… your application image now has a complete(ish) copy of Debian inside it. This is hidden from your traditional infrastructure operations team. It may not even be an OS that is standard in your organisation! If the OS layer needs a patch then the application team is now responsible for patching and deploying the fixed containers.

Will they be tracking these vulnerabilities? How quickly would they have fixed shellshock? Or (in more advanced cases) Apache Struts?
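One way to start answering these questions is to find out which distribution an image actually carries. A rough sketch that reads the os-release file straight out of a saved layer, assuming the layer is a plain tar file as seen earlier and that the distribution ships usr/lib/os-release or etc/os-release (the function name and the stand-in layer are hypothetical):

```shell
#!/bin/sh
# Print the os-release file stored in a layer tarball, if there is one.
# /etc/os-release is often a symlink to /usr/lib/os-release, so try that first.
layer_os_release() {
    tar -xOf "$1" usr/lib/os-release 2>/dev/null ||
        tar -xOf "$1" etc/os-release 2>/dev/null
}

# Stand-in layer for illustration; the NAME value is a placeholder.
work=$(mktemp -d)
mkdir -p "$work/root/etc"
printf 'NAME="Example Linux"\n' > "$work/root/etc/os-release"
tar -cf "$work/layer.tar" -C "$work/root" etc/os-release

layer_os_release "$work/layer.tar"
```

Looping this over */layer.tar in an unpacked image gives an operations team at least a first hint of what they are being asked to run.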

Advanced builds

If we’re pulling in upstream images we can use their packages as well:

% cat Dockerfile

FROM centos

RUN yum -y update

RUN yum -y install httpd

CMD ["/usr/sbin/httpd","-DFOREGROUND"]

% docker build -t web-server .

...[stuff happens]...

The RUN command can run any shell command, and creates a new layer. In this case I created two layers (one for yum update and one for yum install). I could have used a single, more complicated command such as yum update && yum install and created only one layer.
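For comparison, the single-layer variant might look like the following. It is written into a scratch directory so it does not overwrite the Dockerfile above; the directory is only a convenience for this sketch:

```shell
#!/bin/sh
# Write a variant of the Dockerfile above with both yum steps in one RUN,
# so the build creates a single layer for them.
dir=$(mktemp -d)
cat > "$dir/Dockerfile" <<'EOF'
FROM centos
RUN yum -y update && yum -y install httpd
CMD ["/usr/sbin/httpd","-DFOREGROUND"]
EOF
echo "wrote $dir/Dockerfile"

# Build it with (requires a Docker engine):
#   docker build -t web-server "$dir"
```

Running docker history web-server after each variant lists the layers of the result, which makes the difference easy to see.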

Exercise for those following at home: Extract the image (docker save) and look at the layers created. Note that data in /var/lib/yum and /var/lib/rpm has also changed.

But we now have a web server! Let’s give it a file to serve:

% cat web_base/index.html

<html>

<head>Test</head>

<body>

This is a test

</body>

</html>

% docker run --rm -d -p 80:80 -v $PWD/web_base:/var/www/html \

-v /tmp/weblogs:/var/log/httpd web-server

63250d9d48bb784ac59b39d5c0254337384ee67026f27b144e2717ae0fe3b57b

The -v flag maps a local directory onto a directory inside the container. In this case we mapped the HTML directory and the Apache log directory.

This is one way we can pass configuration into a container and get log information out of it.

Notice we didn’t write any special code! This is standard Apache running in a container.

And it works!

% docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

63250d9d48bb web-server "/usr/sbin/httpd -..." 2 minutes ago Up 2 minutes 0.0.0.0:80->80/tcp modest_shirley

% curl http://localhost

<html>

<head>Test</head>

<body>

This is a test

</body>

</html>

% cat /tmp/weblogs/access_log

172.17.0.1 - - [18/Jun/2017:14:08:59 +0000] "GET / HTTP/1.1" 200 63 "-" "curl/7.29.0"

In the ps output we can see the port mapping.

The name is randomly generated, but can be set with the --name option on the docker run command line.

Summary

In this blog we’ve taken a basic look at:

- The Docker engine

- How to build a simple container

- What the container looks like

- Library dependencies

- Layers

- Pulling in external images

- Running almost anything at build time (“yum”)

- Mapping local directories into the container

- Configuration files, log files

We’ve also seen some security concerns around developers being able to pull random files from anywhere into these containers, and the result being an opaque box to infrastructure operations.

We can start to see some of the risks in running Docker instances. These also apply to vendor-provided containers, and for similar reasons. The vendor becomes responsible for patching the contents of the container, which should mean more frequent releases (they not only need to fix their application, they also need to fix the OS). Do they? Will your teams have the time needed to run these new containers through test/QA/prod release cycles?

In later blog entries I’ll start to look inside a running container and look at some ways we can detect intrusion and protect against it.