I’ve spent the past far-too-many years working in the finance industry, in mega-banks and card processors. These companies are traditionally very worried about information security. That’s not to say they always do it well (everyone makes mistakes), but it leads to a conservative attitude.

These types of companies end up creating a massive set of standards and procedures to protect themselves. “Thou MUST do this. Thou MUST do that.” Any deviation from the norm that weakens a control is a risk and needs documenting and tracking.

There are a number of assumptions involved in these standards. For example, the physical disk is in our datacenter; the SAs with logical access have gone through corporate vetting; the physical access controls to datacenters are known; network connectivity is known. At a higher layer, all internet-facing services have been signed off (firewalls need to be opened to allow incoming connections); 3-tier architectures are defined and maintained; data loss prevention tools exist. In essence we’ve built both physical and logical barriers to control ingress and egress of data. You might even hear phrases such as “within the company’s four walls” to refer to this.

Now this scenario does assume staff can be trusted; there’s a whole other set of controls and processes to protect against that, but that’s kinda out of scope of this blog posting.

How does the cloud change this?

Before we can answer this question we need to consider what is meant by cloud. NIST defines cloud in terms of three primary service models: IaaS (Infrastructure as a Service), PaaS (Platform as..) and SaaS (Software…). These really are rough demarcation points because a cloud engagement may include components of all of these (e.g. a Big Data offering is a SaaS, but it may offer “compute VMs” where you can install your own software… an IaaS).

I’m not going to consider SaaS here because, in essence, this isn’t really much different to traditional service outsourcing. HR departments have outsourced to specialist providers for decades; we’re now seeing this at the IT technology layer. You put your data into someone else’s software, run on their machines, and trust it. Where things have changed is that your outsourced provider now may, themselves, run on an IaaS. To trust the SaaS provider you may need to know their dependencies and the underlying IaaS provider.

Similarly with a PaaS; now you throw your software into someone else’s compute environment… which may run on an IaaS.

In both the SaaS and PaaS model we have to worry about controls at the service layer level, and potentially controls at the IaaS layer.

Which leads us to IaaS solutions. The worry, here, is commonly around the management of the physical infrastructure.

Sure, there’s a challenge around using Cloud Service Providers (CSPs) such as Amazon, simply because they provide so many services and tools. But we can work on that. We can define standards. We can build logical constructs that compare to the traditional four-wall security model.

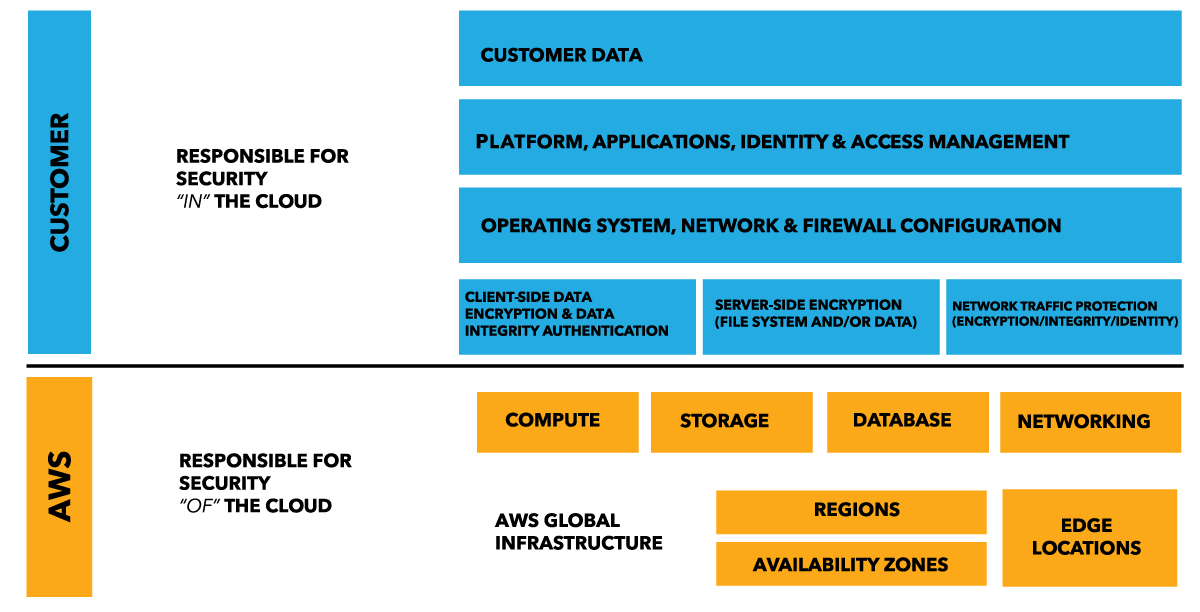

But we’ve still got the lower layers. This is what Amazon calls the Shared Responsibility Model.

Effectively, the CSP has replaced your Datacenter Operate team and a portion of your SA team. And these are people you don’t know; they’ve not been through your corporate vetting process, had their fingerprints taken, peed in a cup for drug testing… how can you trust these guys?

The shared responsibility model also applies to PaaS and SaaS offerings; the line of responsibility just moves upwards. I like to call this “above the line” and “below the line”. The CSP is responsible for stuff below the line; you’re responsible for stuff above the line. Where that line is drawn is unique to each engagement and CSP.
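To make the “above the line” and “below the line” idea concrete, here’s a minimal sketch. The layer names and where the line sits for each service model are my assumptions for illustration; your real line depends on the engagement and the CSP.

```python
# Illustrative stack, ordered from the bottom layer to the top.
# The layer names and line positions are assumptions for this sketch.
LAYERS = ["physical", "network", "hypervisor", "operating system",
          "runtime", "application", "data"]

# Highest layer the CSP manages in each (hypothetical) engagement.
LINE = {"IaaS": "hypervisor", "PaaS": "runtime", "SaaS": "application"}


def split_responsibility(model):
    """Return (below_the_line, above_the_line) for a service model."""
    cut = LAYERS.index(LINE[model]) + 1
    return LAYERS[:cut], LAYERS[cut:]


for model in LINE:
    below, above = split_responsibility(model)
    print(f"{model}: CSP owns {below}; you own {above}")
```

Notice that in this sketch the data layer stays above the line in every model; that part is always yours to protect.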

Mitigations

The first thing we do is try to limit our exposure. Encrypt things where you can. Replicate your existing controls as far as is required (it’ll be a new implementation but you need firewalls, DLP, logging…). Determine what those controls really are, rather than just the decade-old “this is how we’ve always done it”.

There is some new cloud-specific stuff too: always encrypt data at rest (block store, S3, databases). Automate detection of misconfiguration (an open S3 bucket? That’s not right! Open egress from your VM to the internet? Huh, that bypasses DLP!). Can your application work purely on encrypted data, and never need to see the plain text?
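Here’s a rough sketch of what automated misconfiguration detection might look like. It runs over a made-up inventory; the record format and field names are hypothetical, and in practice you’d pull this data from your CSP’s APIs or use an existing configuration scanning tool.

```python
# Hypothetical inventory records; in practice these would be pulled from
# the CSP's APIs. Field names here are assumptions for illustration.
inventory = [
    {"type": "bucket", "name": "reports", "public": True, "encrypted": True},
    {"type": "volume", "name": "db-disk", "encrypted": False},
    {"type": "firewall", "name": "app-egress", "direction": "egress",
     "destination": "0.0.0.0/0"},
    {"type": "bucket", "name": "archive", "public": False, "encrypted": True},
]

STORAGE_TYPES = ("bucket", "volume", "database")


def find_misconfigurations(resources):
    """Return a list of (resource name, finding) tuples."""
    findings = []
    for res in resources:
        # An open bucket is never right.
        if res["type"] == "bucket" and res.get("public"):
            findings.append((res["name"], "bucket is publicly readable"))
        # Always encrypt data at rest.
        if res["type"] in STORAGE_TYPES and not res.get("encrypted"):
            findings.append((res["name"], "data at rest is not encrypted"))
        # Unrestricted egress to the internet bypasses DLP.
        if (res["type"] == "firewall" and res.get("direction") == "egress"
                and res.get("destination") == "0.0.0.0/0"):
            findings.append((res["name"], "open egress bypasses DLP"))
    return findings


for name, finding in find_misconfigurations(inventory):
    print(f"{name}: {finding}")
```

The value is in running checks like these continuously, so a mistake gets flagged shortly after it’s made rather than at the next audit.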

But at the end of the day we need to have a level of trust in the CSP. What’s to stop them snooping memory from the hypervisor? How far do we trust them to run the “below the line” stuff properly?

What can we do about that?

Trust

Here’s where things start to get a little fuzzy. We no longer have direct visibility into these operations. It’s all below the line, and so an opaque box.

But, really, this isn’t much different to any other technology outsourcing contract. The more I’ve delved into “cloud” the more I’ve realised that we already have (or should have!) processes in place to handle this. Far too frequently I hear people discuss the complexities of a solution in the cloud and I ask “How does cloud change this?”. Most of the time it doesn’t.

So what does cloud change? Staffing is an obvious one; people who didn’t go through your vetting process have access to the physical machines. Access management is another; these staff don’t authenticate via your corporate systems. Patch management? Inventory management? Physical security? The list of things ‘below the line’ goes on and on.

Which is where we have to start to trust, but have a level of verification. There are now a number of certifications that companies can attain to help you gain confidence in the opaque box. The primary one is the SOC2 report, which is part of SSAE18 and replaces the older SAS70 reports.

The SOC reports can be used as a baseline, provided the audit was performed by a trusted auditor. You don’t want a SOC report from “Joe’s bait and audit shop”! A properly performed audit can help provide assurance that many of the below the line controls a company would provide on-premise are also being met by the CSP in the cloud. You may also be interested in the SOC1 and SOC3 reports; all in all they provide a pretty comprehensive picture of the control state of a CSP.

Similarly, a CSP that claims to be Payment Card Industry (PCI) compliant will have additional audits via a Qualified Security Assessor (QSA). It’s important to note the limitations of these certifications, though; a CSP like Amazon has over a hundred services but not all of them are PCI certified.
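That limitation is easy to check for once you have the lists. A minimal sketch, with invented service names; the real in-scope list comes from your CSP’s compliance documentation:

```python
# Hypothetical data: the service names and the in-scope set are made up.
# The real list comes from the CSP's compliance documentation.
pci_in_scope = {"object-storage", "virtual-machines", "managed-database"}
services_we_use = {"object-storage", "virtual-machines", "shiny-new-queue"}

# Set difference: anything we use that the certification does not cover.
uncovered = sorted(services_we_use - pci_in_scope)

if uncovered:
    print("Not covered by the CSP's PCI certification:", ", ".join(uncovered))
else:
    print("All services in use are covered.")
```

Anything that shows up in `uncovered` either stays away from card data or becomes a risk to document and track.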

Summary

Personally, I’ve been using virtual machines from providers for over a decade (my Linode was first deployed in 2004). But I’m not processing credit cards or bank accounts so I’m not a big risk. As long as I avoid being an idiot then my biggest opponent is a script; who wants to break into me? (No no no; that’s not an invitation!!)

But a bank? Ah, that’s a different matter entirely. They have data people want; they’re an attraction. Delegating control of some of the base layers is scary.

It all boils down to how much you can trust your CSP, and how much you can verify that trust. SOC reports, COAs, contractual obligations and insurance can all help with this verification and indemnity.

Ten years ago I would not have placed critical processing in the cloud but, today, I think things have matured enough that PCI data can be handled there securely.

If you look at most of the recent “cloud based” data breaches, they’ve not been at the CSP layer of the shared responsibility model; they’ve been at the customer layer. Most frequently it’s an open S3 bucket, but also open unauthenticated MongoDB instances, and similar.

Yes, there is a risk your CSP might be broken into… but this is a micro-hole of a risk compared to the tank-sized hole you’re unwittingly building!

Manage the risk accordingly.