In many organisations an automated scan of an application is done before it’s allowed to “go live”, especially if the app is externally facing.

There are typically two types of scan:

- Static Scan

- Dynamic Scan

Static scan

A static scan is commonly a source code scan. It will analyse code for many common failure modes. If you’re writing C code then it’ll flag common buffer overflow patterns. If you’re writing Java with database connectors it’ll flag common password exposure patterns. This is a first line of defense. If the scan runs quickly enough then it might even be part of your code commit path; e.g. a git merge must pass static scanning before it can be accepted, with the scan fired automatically as a triggered event.
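To make the idea concrete, here is a deliberately tiny static check in Python. It is a sketch, not a substitute for a real scanner. It parses source with the standard `ast` module, without ever running it, and flags string literals bound to names that look like credentials: the kind of “password exposure pattern” described above. The name-matching rule and the function names are illustrative assumptions. The non-zero exit status on findings is what would let it act as a commit or merge gate.

```python
import ast
import re
import sys

# Hypothetical rule: identifiers that suggest a credential.
SUSPICIOUS_NAME = re.compile(r"passw(or)?d|secret|api_?key", re.IGNORECASE)


def _is_str_literal(node: ast.AST) -> bool:
    return isinstance(node, ast.Constant) and isinstance(node.value, str)


def find_hardcoded_secrets(source: str, filename: str = "<string>") -> list[str]:
    """Flag string literals bound to credential-like names.

    The source is parsed into a syntax tree but never executed,
    which is what makes this a *static* check.
    """
    findings = []
    for node in ast.walk(ast.parse(source, filename=filename)):
        # e.g.  db_password = "hunter2"
        if isinstance(node, ast.Assign) and _is_str_literal(node.value):
            for target in node.targets:
                if isinstance(target, ast.Name) and SUSPICIOUS_NAME.search(target.id):
                    findings.append(
                        f"{filename}:{node.lineno}: hard-coded credential in '{target.id}'"
                    )
        # e.g.  connect(host="db", password="hunter2")
        elif isinstance(node, ast.keyword) and node.arg and _is_str_literal(node.value):
            if SUSPICIOUS_NAME.search(node.arg):
                line = getattr(node, "lineno", "?")
                findings.append(f"{filename}:{line}: hard-coded credential in '{node.arg}'")
    return findings


def main(paths: list[str]) -> int:
    findings = []
    for path in paths:
        with open(path, encoding="utf-8") as fh:
            findings.extend(find_hardcoded_secrets(fh.read(), path))
    for finding in findings:
        print(finding)
    # A non-zero exit status lets a commit hook or CI job reject the change.
    return 1 if findings else 0


if __name__ == "__main__":
    sys.exit(main(sys.argv[1:]))
```

Saved under a hypothetical name such as `find_secrets.py`, it could be run as `python find_secrets.py app.py` from a hook or CI job. Being purely pattern based, it misses `password = os.environ["DB_PASS"]`-style indirection entirely, and it will flag a harmless dummy password in a test fixture.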

Static scans aren’t perfect, of course. Nothing is! They can miss some cases, and can produce false positives on others (requiring workarounds). So a static scan isn’t a replacement for a code review (which can also check whether the code logic does the right thing), but is complementary to it.

Static analysis was one reason for a massive increase in the security of open-source projects, by proactively flagging potential risks.

Dynamic scan

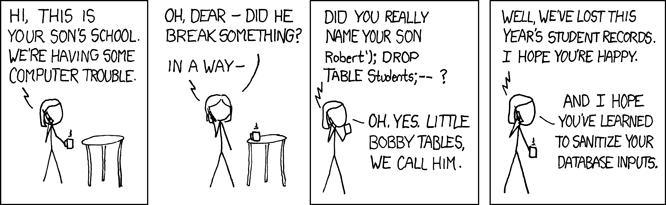

A dynamic scan actually tests the component while it’s running. For a web site (the most common form of publicly exposed application) it can automatically discover execution paths, fuzz input fields and try to present bad data. Dynamic scanning can only test the code paths it knows about (or discovers); stuff hidden behind authentication must be special-cased. And it could potentially cause bad behaviour; it could trigger a little Bobby Tables event, so be very careful about scanning production systems!
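The fuzzing idea, and the Bobby Tables risk, can be shown in miniature. Rather than a real web server, this sketch uses a hypothetical in-process handler, `lookup_user`, that builds SQL by string formatting against an in-memory SQLite database (Python’s standard `sqlite3` module). The “scanner” throws a few classic bad inputs at it and reports any that cause a database error or return more rows than a lookup should. Real dynamic scanners do this over HTTP against the forms they discover; the payload list and the “at most one row” rule here are illustrative assumptions.

```python
import sqlite3


def make_app_db() -> sqlite3.Connection:
    """Hypothetical application database: an in-memory table of students."""
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE students (name TEXT)")
    db.executemany("INSERT INTO students VALUES (?)", [("alice",), ("bob",)])
    return db


def lookup_user(db: sqlite3.Connection, name: str) -> list:
    """Deliberately vulnerable handler: the input is pasted into the SQL text."""
    return db.execute(f"SELECT name FROM students WHERE name = '{name}'").fetchall()


# A few classic "bad data" inputs a dynamic scanner might try on a form field.
PAYLOADS = ["bob", "'", "' OR '1'='1", "x'; DROP TABLE students; --"]


def fuzz(handler) -> list[str]:
    """Send each payload to the handler and report suspicious behaviour."""
    findings = []
    for payload in PAYLOADS:
        db = make_app_db()  # a fresh, throwaway database per attempt, never real data
        try:
            rows = handler(db, payload)
            if len(rows) > 1:  # assumption: a name lookup should match at most one row
                findings.append(f"{payload!r}: returned {len(rows)} rows")
        except (sqlite3.Error, sqlite3.Warning) as exc:
            findings.append(f"{payload!r}: database error ({exc})")
        finally:
            db.close()
    return findings


for finding in fuzz(lookup_user):
    print(finding)
```

Three of the four payloads are flagged. Switching `lookup_user` to a parameterised query, `db.execute("SELECT name FROM students WHERE name = ?", (name,))`, makes `fuzz` report nothing. Note that the fuzzer only ever touches a disposable database; pointed at production data, the same probes could do real damage.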

Common scanning

A common practice is to combine both types of scan. A static scan is typically quick and can be done frequently. A dynamic scan may take hours, and so may not be done every time.

So a common workflow may be:

- Developer codes, tests stuff out in a local instance

- Developer commits (potential static scan here)

- “Pull request”

- Code review

- Code merge (static scan here)

So far work has been done purely in development; no production code has been pushed. The developer is allowed to develop and test their code with minimal interruption. It’s only when code is “ready” that scanning occurs.

Now, depending on your environment and production controls, things may get more complicated. Let’s assume a “merge” then triggers a “QA” or “UAT” build.

- Merge fires off “Jenkins” process

- Jenkins stands up a test environment

- Dynamic scan occurs

- Merge successful only if the scan is successful.

Depending on the size or complexity of the app this scan could take hours. One advantage of micro-services is that each app has a small footprint, so the scan should be a lot quicker.
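In Jenkins itself this gating would normally live in the job or pipeline definition; the Python below is only a tool-agnostic sketch of the logic in the list above. Each stage is a command, stages run in order, and the merge is allowed only if every stage exits with status 0. The stage names and commands are hypothetical placeholders, not real deployment or scanner invocations.

```python
import subprocess
import sys


def run_gate(stages: list[tuple[str, list[str]]]) -> bool:
    """Run each (name, command) stage in order, stopping at the first failure.

    Returns True only if every stage exits with status 0, i.e. the merge may proceed.
    """
    for name, command in stages:
        result = subprocess.run(command)
        if result.returncode != 0:
            print(f"stage '{name}' failed (exit {result.returncode}); merge rejected")
            return False
        print(f"stage '{name}' passed")
    return True


if __name__ == "__main__":
    # Placeholder commands standing in for "stand up test environment" and "run the scan".
    stages = [
        ("deploy-test-env", [sys.executable, "-c", "print('test environment up')"]),
        ("scan", [sys.executable, "-c", "import sys; sys.exit(0)"]),
    ]
    sys.exit(0 if run_gate(stages) else 1)
```

Stopping at the first failure means a broken test environment never gets scanned, and a long scan is never started for a build that has already failed.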

It’s important for a developer to not try and hide code paths. “Oh this scanning tool stops me from working, so I’ll hide stuff so it never sees it”… We all have the desire to do things quicker and know we’re smarter than “the system”, but going down this path will lead to bugs.

Sidebar: there’s the standard open-source saying: “with sufficient eyes, all bugs are shallow”. There’s a corollary to this: “with sufficient people using your program, all bugs will be exploited”.

Scanning this site and false positives

Now this site is pretty simple. I use Hugo to generate static web pages. That means there’s no CGI to be exploited and no database connections to be abused. It’s plain and simple.

But does that mean a scan of the site would be clean? Is the Apache server configured correctly? Have I added some bad CGI to the static area of the site? Have I made another mistake somewhere?

The Kali Linux distribution includes a tool called Nikto (no bonus points for knowing where the name comes from). This is a web scanner that can check for a few thousand known issues on a web server. It’s not a full host scan (it doesn’t check for other open ports, for example).

WARNING: Nikto is not a stealth tool; if you use this against someone’s site then they WILL know.

Given how my site is built, I wouldn’t expect it to find any issues.

Let’s see!

+ Server: Apache
+ Server leaks inodes via ETags, header found with file /, fields: 0x44af 0x533833f94c500
+ Multiple index files found: /index.xml, /index.html
+ The Content-Encoding header is set to "deflate" this may mean that the server is vulnerable to the BREACH attack.
+ Allowed HTTP Methods: POST, OPTIONS, GET, HEAD
+ OSVDB-3092: /sitemap.xml: This gives a nice listing of the site content.
+ OSVDB-3268: /icons/: Directory indexing found.
+ OSVDB-3233: /icons/README: Apache default file found.
+ 8328 requests: 0 error(s) and 7 item(s) reported on remote host

Interesting: it flagged 7 items.

But if we look closer, these are not necessarily issues. Is the “inode number” something we need to care about? I don’t think so. The sitemap.xml file is deliberately there to allow search engines to find stuff. The /icons directory is the Apache standard… is there a risk to having this exposed?
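One way to judge the ETag item is to decode it. This sketch assumes Apache’s ETag layout, where the enabled `FileETag` components (inode, size, mtime, in that order) are written in hex and joined by `-`, with the mtime in microseconds since the epoch. Apache 2.4’s default is `FileETag MTime Size`, so if this server uses the default, the two fields Nikto reported are a file size and a modification time, and no inode is present at all. That layout is an assumption about this server, and `decode_apache_etag` is an illustrative name.

```python
from datetime import datetime, timezone


def decode_apache_etag(fields: list[str], layout: tuple[str, ...] = ("size", "mtime")) -> dict:
    """Decode hex ETag fields under an assumed Apache FileETag layout.

    ("size", "mtime") matches Apache 2.4's default "FileETag MTime Size";
    pass ("inode", "size", "mtime") for the older "INode MTime Size" default.
    """
    if len(fields) != len(layout):
        raise ValueError(f"expected {len(layout)} fields, got {len(fields)}")
    # int(..., 16) accepts an optional "0x" prefix, as in Nikto's output.
    decoded = {name: int(value, 16) for name, value in zip(layout, fields)}
    if "mtime" in decoded:
        # Assumption: Apache's mtime component is in microseconds since the Unix epoch.
        decoded["mtime"] = datetime.fromtimestamp(decoded["mtime"] / 1_000_000, tz=timezone.utc)
    return decoded


print(decode_apache_etag(["0x44af", "0x533833f94c500"]))
```

With the two fields from the scan above, this gives a size of 17,583 bytes and a modification time of 2016-05-23 14:28:04 UTC. A sensible file date like that suggests the layout assumption holds, in which case the “leaks inodes” warning is itself a false positive for this server.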

So even a “clean” site may have issues according to the scanning tool, even though it really doesn’t. (At least I assume it doesn’t! My evaluation may be wrong…)

It takes a human to filter out these false positives. If you’re going to put this as part of your CI/CD pipeline then make sure you’ve filtered out the noise first!
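A minimal sketch of that filtering step, assuming Nikto’s plain-text output format shown above (one finding per `+ ` line). Findings a human has already reviewed and accepted go into a hypothetical baseline of substrings; anything not covered by the baseline fails the build. The baseline entries (taken from the items discussed above) and the list of informational lines to skip are assumptions to adapt to your own site.

```python
import sys

# Hypothetical baseline: findings reviewed by a human and accepted for this site.
ACCEPTED = [
    "Server leaks inodes via ETags",
    "/sitemap.xml",
    "/icons/",
]

# Line prefixes treated as informational rather than as findings.
INFORMATIONAL = ("Server:",)


def new_findings(report: str, accepted: list[str] = ACCEPTED) -> list[str]:
    """Return Nikto findings not covered by the accepted baseline."""
    findings = []
    for line in report.splitlines():
        line = line.strip()
        if not line.startswith("+ "):
            continue  # blank lines and anything that isn't a finding line
        text = line[2:]
        if text.startswith(INFORMATIONAL) or " requests: " in text:
            continue  # server banner and the end-of-scan summary
        if not any(entry in text for entry in accepted):
            findings.append(text)
    return findings


if __name__ == "__main__":
    unexpected = new_findings(sys.stdin.read())
    for finding in unexpected:
        print("NEW:", finding)
    # Fail the pipeline only on findings nobody has reviewed yet.
    sys.exit(1 if unexpected else 0)
```

Fed the report above, this baseline still leaves three items (the multiple index files, the BREACH warning and the allowed HTTP methods). Each needs a human decision before it either gets fixed or joins the baseline; the point is that the pipeline then only interrupts people for genuinely new results.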

Summary

Static and dynamic scanning tools are complementary. They both have strengths and weaknesses, and both should be used. Even if your code is never exposed to the internet it’s worth doing, to help protect against the insider threat and to stop an attacker who already has a foothold from being able to exploit your server.

They do not replace humans (e.g. code review processes) but can help protect against common failure modes.

ADDITIONAL

2016/10/13 The ever interesting Wolf Goerlich, as part of his “Stuck in traffic” vlog, also points out another limitation of scanning tools: they don’t handle malicious insiders writing bad code (and, by extension, an intruder able to get into the source code repo and make updates). You still need humans in the loop (e.g. code review processes), whether it’s to test for code logic or malicious activities. You should watch Wolf’s vlog.