In my previous post I wrote about some automation of static and dynamic scanning as part of the software delivery pipeline.

However, nothing stays the same: we find new vulnerabilities, configurations get broken, or something previously considered secure is now weak (ciphers with 64-bit blocks can be attacked, for example).

So as well as scanning during the development cycle, we also need to repeatedly scan deployed infrastructure, especially if it’s externally facing (though internal-facing services may still be at risk from the tens of thousands of desktops in your organisation).

In this spirit I decided to scan this server using OpenVAS, which was installed as part of the Kali Linux build. OpenVAS can also call other tools (e.g. Nikto, which I mentioned previously).

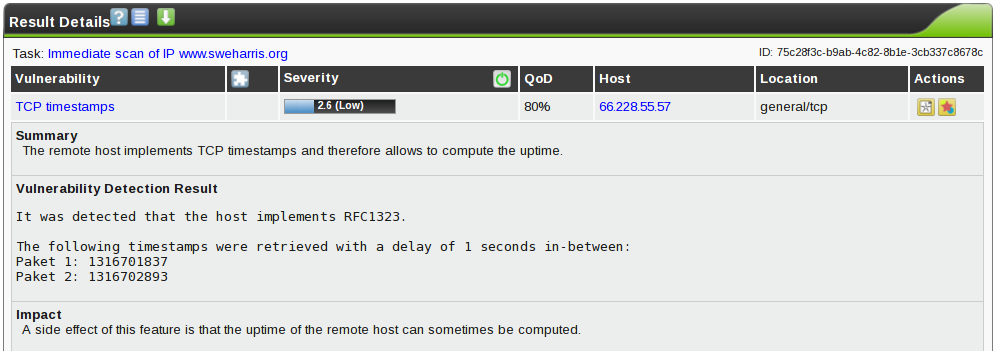

Now I’d done this back in May and got a single “low level” message about TCP timestamps, so I didn’t expect to see anything new.

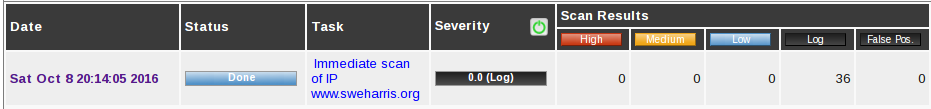

So let’s do a run:

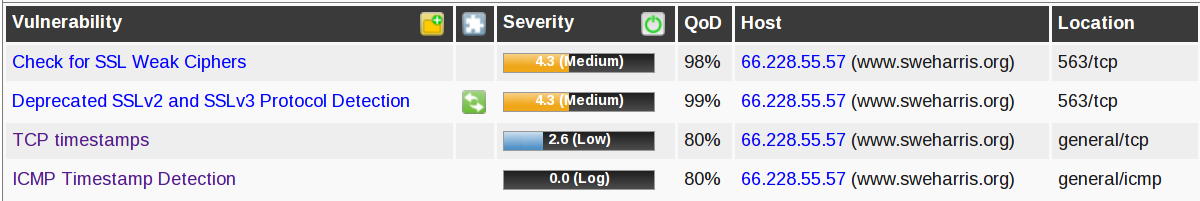

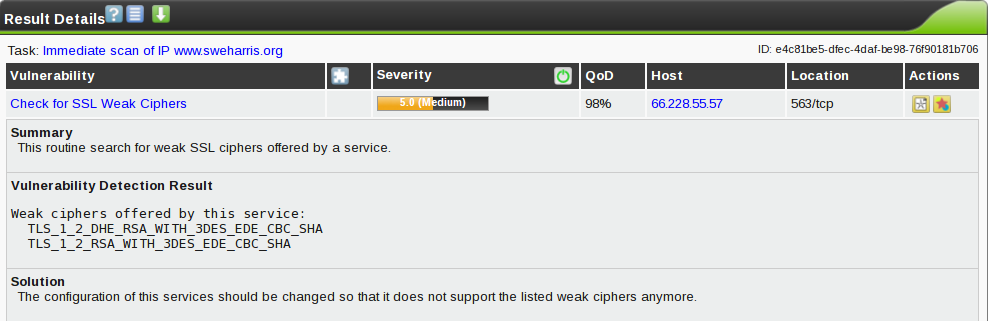

Well, damn! That’s not right! I get an A+ on ssllabs tests (more on that in a later post). What’s going on?

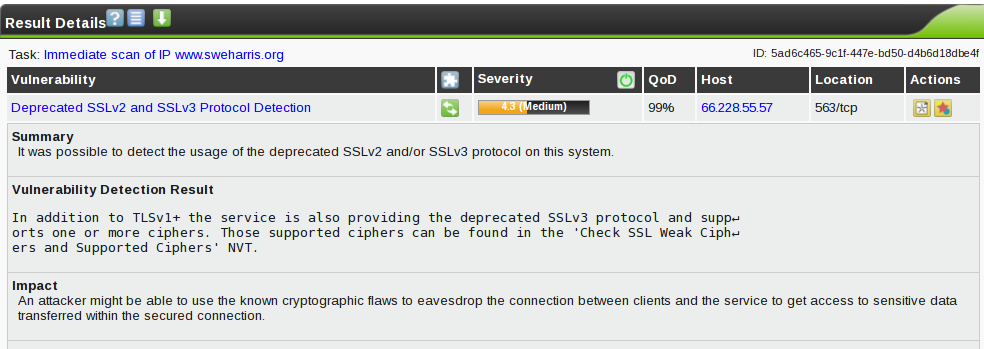

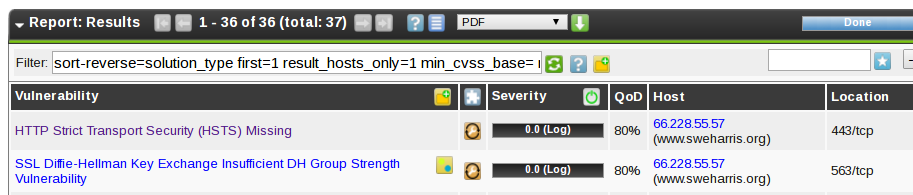

Oh… port 563? That’s NNTPS. Oh! I’d recently installed an SSL news server on this machine! And it seems the defaults aren’t so secure.

So I dug into the manual page to work out how to disable SSLv2 and SSLv3, and also how to set the cipher suite to match that of my Apache server.

At the same time I told OpenVAS to update its libraries of known threats and issues. A scanner with a stale database isn’t really much use, and OpenVAS has an update mechanism, with advisories being fed from DFN-CERT. This is pretty impressive, all the more so given that it’s free.

So, problem solved, right?

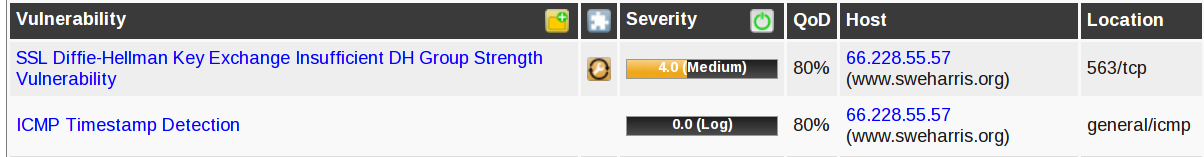

Damnit! Now my web server is also being flagged! And a new DH issue. Interestingly the TCP Timestamp issue has gone away; maybe that was no longer really considered a problem. Evaluations change.

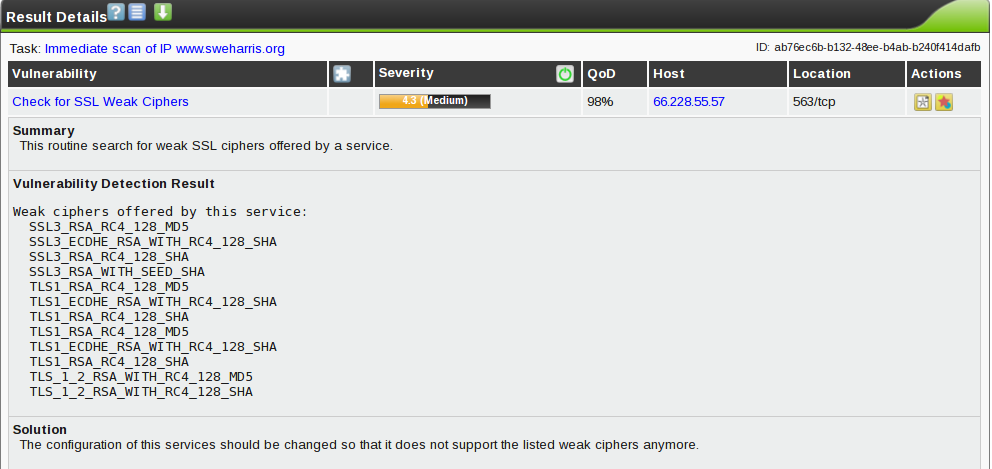

So what is weak…

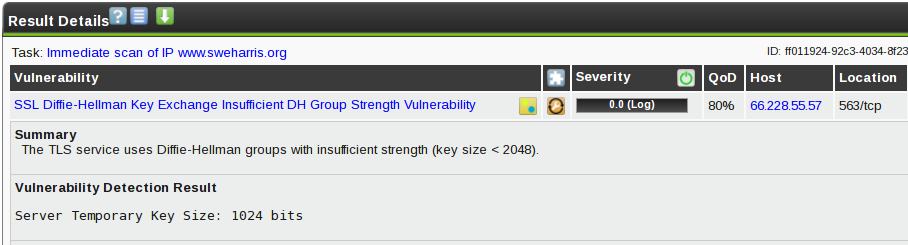

Now this is annoying. The values reported here don’t match the config entries used by OpenSSL.

Are these weak? Running `nmap -p 443 hostname --script +ssl-enum-ciphers` reports those entries in the output and considers them strong. As we’ve seen, though, evaluations can change. This is why a scanning tool needs update abilities. I’m more likely to believe the OpenVAS “weak” report as being newer and more accurate than the built-in nmap rules that RedHat won’t have updated in forever.
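One way to bridge that naming gap is a small lookup table. The sketch below assumes the scanner reports IANA-style suite names (the report above is only a screenshot, so that is a guess) and maps the three 3DES suites that come up in this post to the OpenSSL-style names used in the config. The table and the `exclusions_for` helper are hypothetical, and some OpenSSL versions may spell the DHE entry differently.

```python
# Hypothetical lookup table: IANA-style cipher suite names (an assumed
# scanner output format) mapped to OpenSSL-style config names.
IANA_TO_OPENSSL = {
    "TLS_RSA_WITH_3DES_EDE_CBC_SHA": "DES-CBC3-SHA",
    # Spelled EDH-... in this post; other OpenSSL versions may differ.
    "TLS_DHE_RSA_WITH_3DES_EDE_CBC_SHA": "EDH-RSA-DES-CBC3-SHA",
    "TLS_ECDHE_RSA_WITH_3DES_EDE_CBC_SHA": "ECDHE-RSA-DES-CBC3-SHA",
}


def exclusions_for(reported_names):
    """Translate reported suite names into '!NAME' exclusion entries.

    Returns (exclusions, unknown), where unknown lists any reported
    names that the table cannot translate.
    """
    exclusions, unknown = [], []
    for name in reported_names:
        openssl_name = IANA_TO_OPENSSL.get(name)
        if openssl_name is None:
            unknown.append(name)
        else:
            exclusions.append("!" + openssl_name)
    return exclusions, unknown


if __name__ == "__main__":
    reported = [
        "TLS_RSA_WITH_3DES_EDE_CBC_SHA",
        "TLS_DHE_RSA_WITH_3DES_EDE_CBC_SHA",
    ]
    print(exclusions_for(reported))
```

Anything that lands in the `unknown` list still needs a manual lookup, which is rather the point: the table only helps for names you have already translated once.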

Now my SSL configuration included entries that mentioned DES:

- `ECDHE-RSA-DES-CBC3-SHA`

- `EDH-RSA-DES-CBC3-SHA`

- `DES-CBC3-SHA`

- `!DES`

This was to allow IE6 to talk to my site. But let’s remove the three entries and run again. Same result! Still an error.

Some more digging and it seems that the bad entries were brought in with the `HIGH` setting. So now we needed:

- `HIGH`

- `!EDH-RSA-DES-CBC3-SHA`

- `!DES-CBC3-SHA`

The ECDHE entry I’d previously removed wasn’t at fault; that one uses elliptic curve key exchange. If only the output of the “weak ciphers” test and the configuration options matched!
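A quick way to see what a keyword like `HIGH` really pulls in is to ask OpenSSL to expand the cipher string. Here is a minimal sketch using Python’s `ssl` module. It assumes the entries are joined with colons into one cipher string, and it expands against whatever OpenSSL your Python is linked to, which may not match the library your web or news server uses. The helper names are made up for illustration.

```python
import ssl


def expand_cipher_string(cipher_string):
    """Return the cipher names the local OpenSSL enables for cipher_string."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.set_ciphers(cipher_string)
    return [cipher["name"] for cipher in ctx.get_ciphers()]


def find_3des(names):
    """Pick out OpenSSL-style names that use 3DES (they contain DES-CBC3)."""
    return [name for name in names if "DES-CBC3" in name]


if __name__ == "__main__":
    # Assumption: the config entries are joined with ':' into one string.
    cipher_string = "HIGH:!EDH-RSA-DES-CBC3-SHA:!DES-CBC3-SHA"
    names = expand_cipher_string(cipher_string)
    print(len(names), "ciphers enabled")
    print("3DES still enabled:", find_3des(names))
```

Running this before and after a config change shows the effective list, rather than leaving you to guess what a group keyword expands to on a given OpenSSL build.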

Woohoo! Now all the cipher problems have been solved.

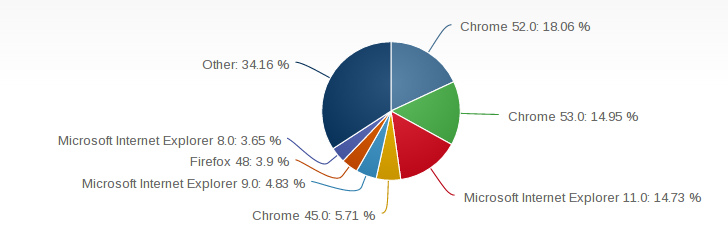

Umm, there’s a downside to this. IE6 and IE8 can no longer reach my site. Do I care? According to a browser market share report IE8 is under 4% of usage. I can live with that.

But what about this DH issue? I looked into the server code and it seems to respond with different key sizes depending on the block size. I’m not sure the code is correct, but I’m not an SSL expert. However, this is a “low value” service: the data transported is passed in clear text on port 119, and the usernames and passwords used to access this server may also be sent in plain text on 119. So I took the decision to “accept the risk” and entered an override for my servers for this issue on this port, reducing the severity to “Log”.
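The idea behind such an override can be sketched in a few lines. This is not how OpenVAS stores or applies overrides internally; the `Finding` and `Override` types, the host name, and the choice of 0.0 to represent “Log” are all assumptions made for illustration. The point is that the override matches one issue on one host and port, and leaves the same issue elsewhere untouched.

```python
from dataclasses import dataclass, replace

LOG_SEVERITY = 0.0  # assumption: "Log" is represented as severity 0.0


@dataclass(frozen=True)
class Finding:
    host: str
    port: str
    check: str
    severity: float


@dataclass(frozen=True)
class Override:
    host: str
    port: str
    check: str
    new_severity: float
    reason: str


def apply_overrides(findings, overrides):
    """Return findings with the severity replaced where an override matches."""
    result = []
    for finding in findings:
        for override in overrides:
            key = (override.host, override.port, override.check)
            if key == (finding.host, finding.port, finding.check):
                finding = replace(finding, severity=override.new_severity)
                break
        result.append(finding)
    return result


if __name__ == "__main__":
    # Hypothetical host name and severity values.
    findings = [
        Finding("news.example.org", "563/tcp", "weak-dh", 4.0),
        Finding("news.example.org", "443/tcp", "weak-dh", 4.0),
    ]
    overrides = [
        Override("news.example.org", "563/tcp", "weak-dh", LOG_SEVERITY,
                 "Low value service; same data is in clear text on 119"),
    ]
    for finding in apply_overrides(findings, overrides):
        print(finding)
```

Recording the reason alongside the override matters as much as the override itself, since someone will eventually ask why the issue was accepted.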

The final result is a score of zero! Yay!

Notice those 36 log entries. Now some of these may be worth looking at.

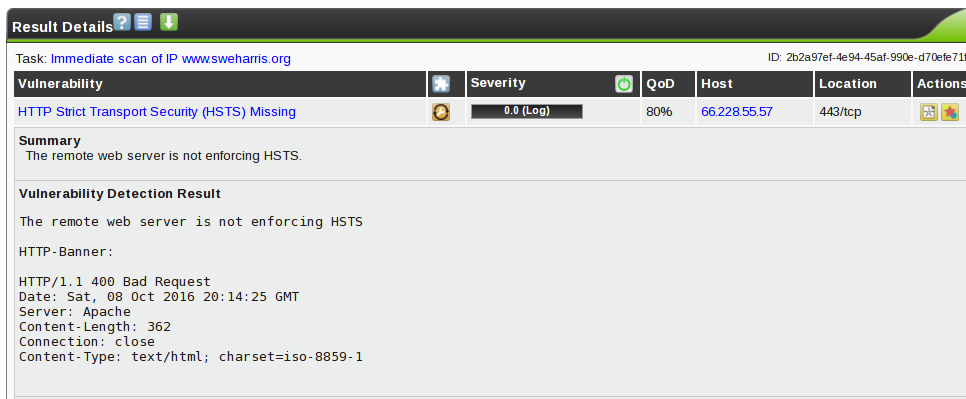

Here’s our override, as a log entry. There’s also an entry claiming HSTS isn’t present. Which is a lie! So let’s look at this:

Ah! This is a response to a ‘bad request’ and it doesn’t see the HSTS header. But a real request does:

$ wget -O /dev/null --server-response https://www.sweharris.org/ 2>&1 | grep Stri

Strict-Transport-Security: max-age=31536000; includeSubdomains

So we can see this is a false positive; I get an A rating for header security at securityheaders.io.
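The same check as the `wget` command can be scripted so it is easy to repeat after each scan. This is a rough sketch: `parse_hsts` and `fetch_hsts` are names I made up, the parsing is deliberately simple rather than a full implementation of the header grammar, and the fetch only looks at the response to an ordinary request (which is exactly the case the scanner didn’t test).

```python
import urllib.request


def parse_hsts(value):
    """Parse a Strict-Transport-Security header value into a dict.

    Directive names are lower-cased; directives without a value
    (such as includeSubDomains) are stored as True.
    """
    directives = {}
    for part in value.split(";"):
        part = part.strip()
        if not part:
            continue
        name, _, arg = part.partition("=")
        directives[name.strip().lower()] = arg.strip().strip('"') or True
    return directives


def fetch_hsts(url):
    """Return the HSTS header from a normal request to url, or None."""
    with urllib.request.urlopen(url, timeout=10) as response:
        return response.headers.get("Strict-Transport-Security")


if __name__ == "__main__":
    header = fetch_hsts("https://www.sweharris.org/")
    print(parse_hsts(header) if header else "No HSTS header on this response")
```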

Lessons Learned

- Keep scanning servers deployed to production; even if the app doesn’t change, something else might

- Keep your scanning tool databases up to date!

- Different tools may report different evaluations for the same issue

- Configuration parameters don’t match reported items

- Sometimes you have to accept losing customers (IE8?) to be secure

- Sometimes you can just accept the insecurity risk. That’s why we have “risk management” teams!

An OpenVAS scan is noisy (it flooded my Apache logs with probe attempts, caused multiple ssh failures, and triggered firewall protection rules). In an enterprise environment you might want to allow-list the traffic from your scanning servers so they don’t trigger SOC alerts or drown out real alerting traffic. But despite this, a full vulnerability scan is useful.
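Allow-listing scanner traffic can be as simple as tagging events by source address before they reach the alerting queue. A minimal sketch, assuming events are dicts with a `src` field and that the scanners live in a known network range; the range below is a documentation address block and the function names are hypothetical.

```python
import ipaddress

# Hypothetical scanner range (a documentation address block).
SCANNER_NETWORKS = [ipaddress.ip_network("192.0.2.0/28")]


def is_scanner(address, networks=SCANNER_NETWORKS):
    """Return True if address falls inside any known scanner network."""
    ip = ipaddress.ip_address(address)
    return any(ip in network for network in networks)


def split_events(events, networks=SCANNER_NETWORKS):
    """Split events into (from_scanner, everything_else) by source address."""
    from_scanner, other = [], []
    for event in events:
        target = from_scanner if is_scanner(event["src"], networks) else other
        target.append(event)
    return from_scanner, other


if __name__ == "__main__":
    events = [
        {"src": "192.0.2.5", "msg": "probe for a missing admin page"},
        {"src": "203.0.113.9", "msg": "ssh login failure"},
    ]
    scanner_events, real_events = split_events(events)
    print(len(scanner_events), "scanner events,", len(real_events), "to alert on")
```

Keeping the scanner events in a separate list, rather than dropping them, leaves a record that the scan actually ran.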

It definitely alerted me to a weakness on my server that I wasn’t aware of!