In previous posts I’ve gone into some detail about how Docker works, and some of the ways we can use and configure it. These have been aimed at technologists who want to use Docker, and at security staff who want to control it.

It was pointed out to me that this doesn’t really help leadership teams. They’re getting shouted at: “We need Docker! We need Docker!” They don’t have the time (and possibly not the skills) to delve into the low levels the way I have. So I’ve put together this higher level overview of the problems with using Docker.

I jokingly call it “Docker Security for CISOs”, and it’s primarily focused on dealing with vendor supplied applications.

Executive Summary

- Docker is a virtualization technology that encompasses

- Virtual Hosts

- Virtual Networks

- Virtual Storage

- Docker can run any workload

- Web servers, application servers, databases, …

- Docker maturity is approximately where Virtual Machine management was 15 years ago

This last point is quite important; we’ve had well over a decade’s worth of experience in dealing with virtual machines and incorporating them into control environments (CMDB, vulnerability management, configuration management, etc). Most of our tooling just doesn’t fit, conceptually, into a container management system (we’re also seeing issues with elastic compute at the VM level). This leads to an important point:

- Companies need to prioritize creation of a standard technology stack and support model to enable business lines and dev teams to use this.

Container technology is a form of OS Virtualization

Tools such as VMware ESX virtualize hardware

- “Here is a virtual Intel server”

- One physical server, multiple virtual servers

- Each virtual server can be different, but needs to run on the Intel platform

- Windows and Linux living together

- Can run most OSes unchanged

- Can present “virtual” hardware (e.g. network, disk interfaces) that is optimized

- May require special drivers in the guest

Container technologies virtualize the OS

- “Here is a virtual Linux instance”

- One Kernel, multiple OS user spaces

- Each OS user space can be different, but needs to run on the Linux kernel

- Debian and RedHat living together

- Can run everything from init/systemd down to a single process

Docker, Docker, Docker. What is Docker?

Docker can be looked at in various different ways…

- A container run-time engine

- Uses Linux kernel constructs to segregate workloads

- An application packaging format

- Provides a standard way of deploying an application image

- A build engine

- Provides a way of creating the application image

- An ecosystem

- Repositories, tools, images, workflows, …

Layers upon layers

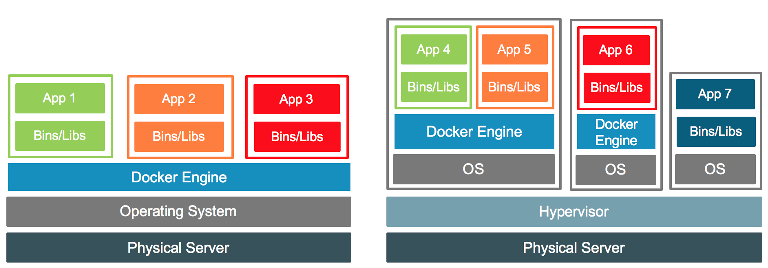

The Docker engine runs as a privileged process in user space, and so sits on top of a running kernel. This means it can run on a VM or on a bare metal OS deployment. Indeed both forms may exist at the same time. In a VM world the physical host may run both containerized and traditional apps inside different VMs.

It’s important to remember that Docker sits on top of an OS (e.g. RedHat); it’s not “standalone”.

(Image from https://blog.docker.com/2016/04/containers-and-vms-together/ )

Docker container images

- A Docker image can contain anything from a single file to a complete OS

- Ubuntu, Debian, CentOS, Alpine, …

- This is an opaque box to our existing tooling and processes

- Are there any known vulnerabilities in it?

- What about new vulnerabilities (new CVEs) found after the container is deployed?

- Our usual agents cannot be installed inside the container

- A Docker container should be considered immutable

- We don’t patch a running instance; we destroy it and replace it with a fixed copy

- How often does the vendor update their containers with patches?

- This may result in more pushes to production than a traditional app

- A Docker container should be considered stateless

- Stateful information (configuration files, log files, data files…) lives outside the container

This impacts how we manage our container environment; so many of our tools and processes just don’t work!
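One way to make the “are there any known vulnerabilities in it?” question concrete is a simple policy gate over a scanner report. The sketch below is only an illustration: the report shape (a list of findings, each with a `severity` field) and the thresholds in `MAX_ALLOWED` are assumptions of mine, not the output format of any particular scanner.

```python
from collections import Counter

# Hypothetical policy: the maximum number of findings tolerated per severity.
# Real thresholds are a risk decision for the organization, not a technical one.
MAX_ALLOWED = {"CRITICAL": 0, "HIGH": 0}


def count_by_severity(findings):
    """Count findings per severity.

    Each finding is assumed to be a dict with a 'severity' key
    (an assumed report shape, not any specific scanner's format).
    """
    return Counter(finding["severity"].upper() for finding in findings)


def image_is_acceptable(findings, max_allowed=MAX_ALLOWED):
    """Return True if no severity exceeds its allowed count."""
    counts = count_by_severity(findings)
    return all(counts.get(sev, 0) <= limit for sev, limit in max_allowed.items())
```

Because images are immutable, a gate like this would need to be re-run against already approved images as new CVEs are published, not just once at ingestion.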

Docker runtime

“Rogue” containers

- `docker run debian`

- We’ve now pulled down a copy of a Debian image from the internet and run it

- Container images could be stored anywhere on the internet

- How do we ensure only approved containers can be run?
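As a rough illustration of what an “approved sources” check might look like, the sketch below works out which registry an image reference points at and compares it with an allowlist. The registry name `registry.example.internal` is a made-up placeholder. The parsing follows the usual Docker naming convention (a first path component containing a `.` or `:`, or equal to `localhost`, is treated as a registry host; anything else defaults to Docker Hub). This only inspects names; actual enforcement would have to happen wherever images are pulled and run.

```python
# Hypothetical allowlist of registries the organization has approved.
APPROVED_REGISTRIES = {"registry.example.internal"}


def registry_of(image_ref):
    """Return the registry host implied by an image reference.

    A bare name such as 'debian' or 'library/debian:12' has no registry
    component, so it resolves to Docker Hub ('docker.io').
    """
    first, sep, _rest = image_ref.partition("/")
    if sep and ("." in first or ":" in first or first == "localhost"):
        return first
    return "docker.io"


def is_approved(image_ref, approved=APPROVED_REGISTRIES):
    """Return True if the image would be pulled from an approved registry."""
    return registry_of(image_ref) in approved
```

With this allowlist, `is_approved("debian")` is false because the bare name resolves to the public Docker Hub.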

Privilege escalation

- Giving people access to the `docker` command grants them root access inside the container

- This can also be used to effectively give them root access to the whole machine

- Access to the machine may allow people to see config files containing passwords or secrets (API keys, SSL certs, etc) needed by the container application

- We may need to consider all interactive access to Docker hosts to be privileged
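On a typical Linux install, access to the `docker` command is granted through membership of a local group (usually called `docker`), so a first step in treating that access as privileged is knowing who is in the group. The sketch below parses text in `/etc/group` format to list those members. The group name is an assumption about the host’s setup, and the function will not see users whose primary group is `docker` or users who get access through sudo rules or a directory service.

```python
def docker_group_members(group_file_text, group_name="docker"):
    """List the supplementary members of a group from /etc/group-style text.

    Each line has the form 'name:password:gid:member1,member2'.
    Returns an empty list if the group is absent or has no listed members.
    """
    for line in group_file_text.splitlines():
        fields = line.strip().split(":")
        if len(fields) >= 4 and fields[0] == group_name:
            return [member for member in fields[3].split(",") if member]
    return []
```

On a real host this might be called as `docker_group_members(open("/etc/group").read())`, with the result fed into whatever privileged access review already exists.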

Image deployment and inventory

- How do we ingest new vendor containers?

- What is the process for going from DEV->SIT/UAT->PROD?

- How do we ensure the image promoted to prod is the same as the one in UAT?

- What is the deployment model required for this vendor application?

- Standalone? Compose? Swarm mode? Kubernetes? Something else?

- What networking constructs are created?

- What ports are opened?

- Inventory of containers

- What images have been approved for use?

- Which have been denied (e.g. too many CRITICAL vulnerabilities)?

- Do we know what containers are running, what version, and where?

- CMDB may be too static, containers may move around a cluster

- Are the containers running that should be running?

- How do we monitor application availability?

- Does the deployment include HA designs?

A number of these questions also apply to traditional applications, and can be carried over, but I’ve heard senior managers say “it’s a container, just deploy it”, bypassing standard code promotion paths.

Some of these questions also apply to virtual appliances (e.g. applications shipped as an OVA file). Do we have good processes we can adapt, or is this also a gap?
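For the “is the image in prod the same as the one in UAT?” question, one common approach is to promote by digest rather than by tag, since a tag can be re-pointed at different content while a `sha256` digest identifies exactly one image. The sketch below compares two digest-pinned references. The function names are hypothetical, and it assumes both environments record references in the `name@sha256:...` form.

```python
import re

# A digest-pinned reference ends in '@sha256:' followed by 64 hex characters.
DIGEST_RE = re.compile(r"@(sha256:[0-9a-f]{64})$")


def digest_of(pinned_ref):
    """Extract the digest from a reference such as 'app@sha256:<hex>'.

    Raises ValueError for tag-only references, which cannot prove identity.
    """
    match = DIGEST_RE.search(pinned_ref)
    if match is None:
        raise ValueError(f"not pinned by digest: {pinned_ref!r}")
    return match.group(1)


def same_image(uat_ref, prod_ref):
    """Return True if both references point at the same image content."""
    return digest_of(uat_ref) == digest_of(prod_ref)
```

The registry host may legitimately differ between environments; it is the digest that has to match.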

Image integrity and logging

- Transient filesystem changes

- A vulnerable app may allow temporary changes to be made inside the container

- These are lost when the container is restarted

- Could be used as a pivot point to attack the rest of the network

- Could be used to host rogue content (phishing site?)

- Existing File Integrity Monitoring tools cannot detect this

- How does the application log activity?

- Each application may do things differently

- How can we get those logs into Splunk?
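Docker itself can report what has changed in a container’s filesystem since it started: `docker diff <container>` prints one line per path, prefixed with `A` (added), `C` (changed) or `D` (deleted). As a minimal sketch of how that could be turned into an integrity check, the code below parses that output and flags changes outside a set of paths where writes are expected. The `ALLOWED_PREFIXES` values are assumptions for illustration; the right list depends on the application.

```python
# Hypothetical locations where the application is expected to write.
ALLOWED_PREFIXES = ("/tmp", "/var/log")


def parse_docker_diff(output):
    """Parse `docker diff` output into a list of (kind, path) tuples."""
    changes = []
    for line in output.splitlines():
        line = line.strip()
        if not line:
            continue
        kind, _, path = line.partition(" ")
        changes.append((kind, path))
    return changes


def is_allowed(path, allowed_prefixes=ALLOWED_PREFIXES):
    """True if path is one of the allowed directories or lives beneath one."""
    return any(path == p or path.startswith(p + "/") for p in allowed_prefixes)


def unexpected_changes(changes, allowed_prefixes=ALLOWED_PREFIXES):
    """Return the changes that fall outside the expected writable paths."""
    return [(k, p) for k, p in changes if not is_allowed(p, allowed_prefixes)]
```

A scheduled job that collects this per container and ships the unexpected entries to the existing log platform would be one way to close part of the gap, though it only sees changes that are still present when it runs.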

Exposed services

One application may effectively introduce a handful of new technologies

- A vendor application may consist of multiple containers

- Each container may contain a different open source technology component

- Tomcat, MySQL, MongoDB, Hadoop, ActiveMQ, Memcached, Zookeeper, …

- These services may be exposed onto the main corporate network

- How do we control them?

- SSL?

- Usernames/passwords?

- Encryption of data at rest

- Backups

- How do we monitor access to these services?

- Database Access Monitoring

- Usage logs?

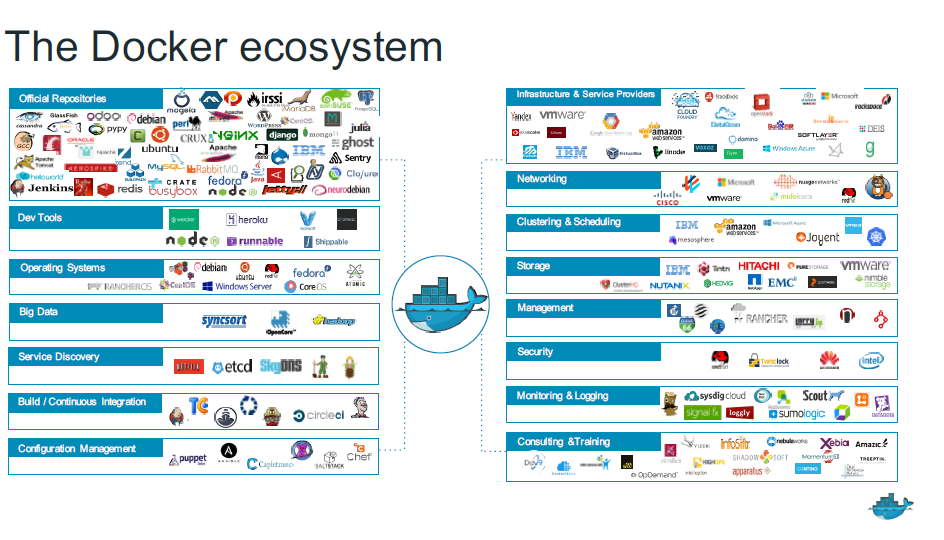

So many options, we can’t support them all

(Source: Docker)

The support model

- Docker becomes a critical core infrastructure component

- As critical as the traditional Hypervisor

- There are “community supported” editions and commercial enterprise editions

- The enterprise edition includes additional functionality not in the community edition

- Different vendors provide different solutions

- VMware provides “vSphere Integrated Containers” (VIC) and additional tooling

- Not 100% Docker compatible (e.g. no Swarm Mode)

- Third party support tools exist (management, security, monitoring, etc)

- Some provide real benefits, others are FUD

- The organization needs to determine when it can use open source/community supported tools, and how much vendor enterprise level support is required.

- And then build the tooling and processes around it

- We can use this to also provide a target platform for in-house developed Docker based apps

It’s complicated

- Docker was designed to be flexible

- Can host anything from a single process to a complete database

- … or even a whole multi-tier solution!

- We can’t support every possible deployment model

- And let’s not talk about other container ecosystems

- OCI (Open Container Initiative)

- RKT (CoreOS Rocket)

- LXD (Ubuntu)

- OpenVZ

- …

There’s a lot of engineering work that needs to be done!

Summary

This kinda grew a little bit too long for a real CISO deck. It’s maybe 14 pages of PowerPoint. But that’s because there’s so much there!

This isn’t even trying to defend against deliberately bad code (if you can’t trust your vendor, why are you using them?) but against mistakes. Everyone makes them, and this technology is so new.

Just last week I was on a call where the vendor had provided a container with 7 HIGH vulnerabilities in it; we wouldn’t allow a normal app to be deployed that way, so why would we allow this container? It turns out the vendor didn’t have a scanning process in their build pipeline, and so (on my prompting) introduced Clair into their tooling. Hopefully this will mean fewer mistakes of this nature and better visibility of new vulnerabilities.

We can’t rely on all vendors doing this, though; we need the tooling in-house to monitor the environment.